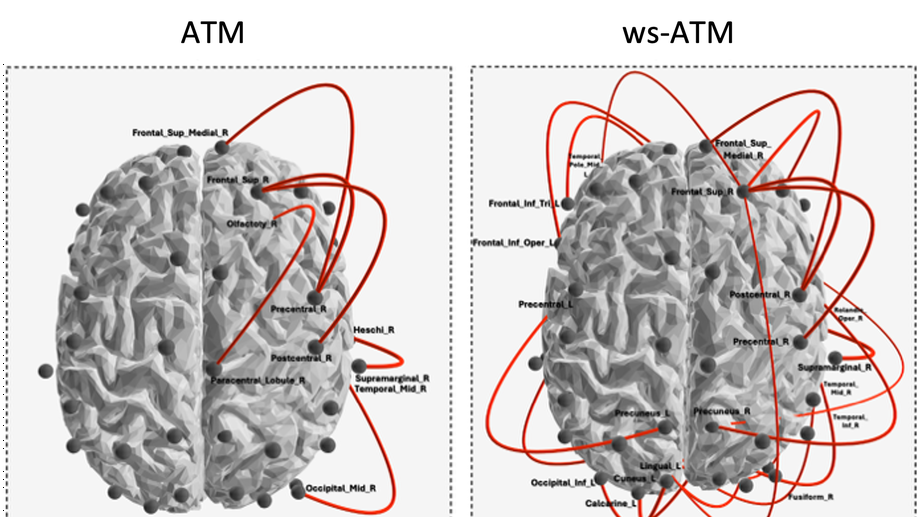

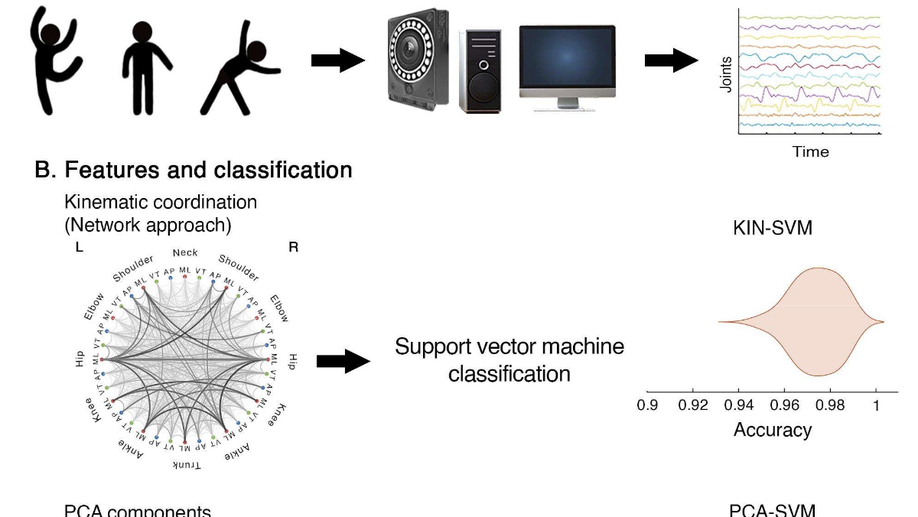

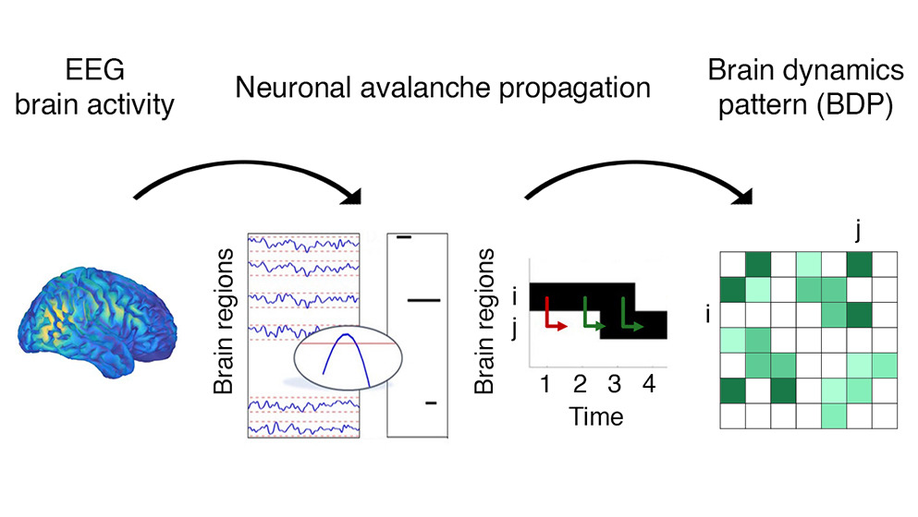

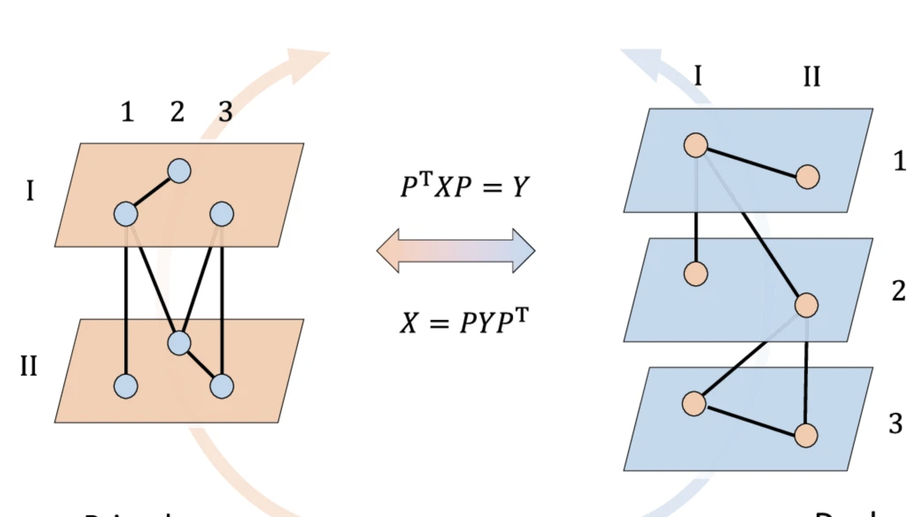

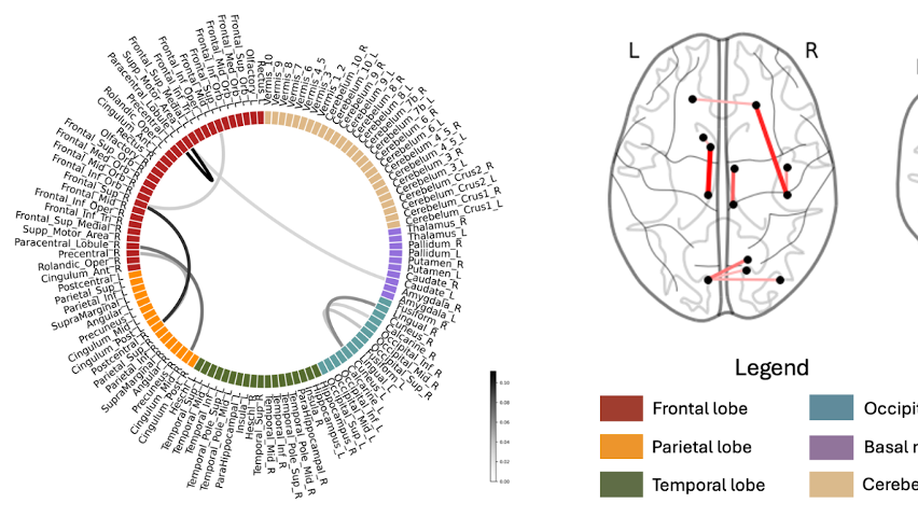

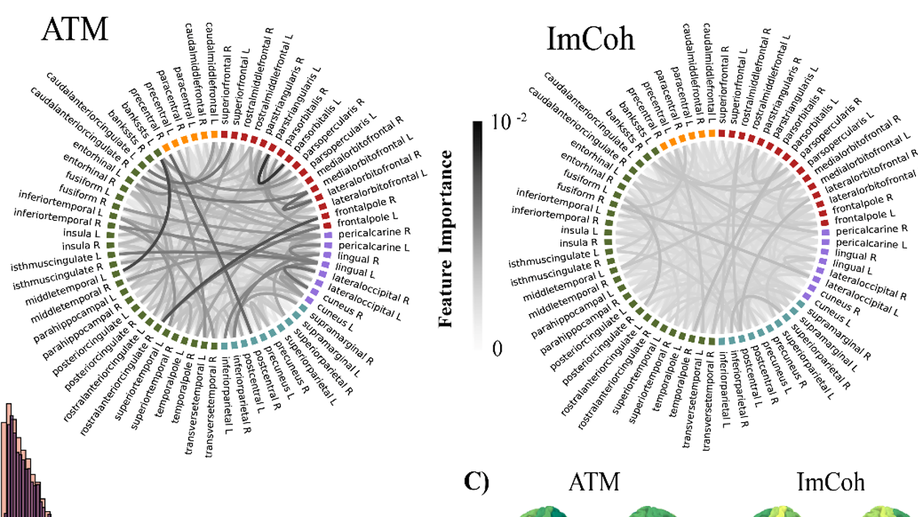

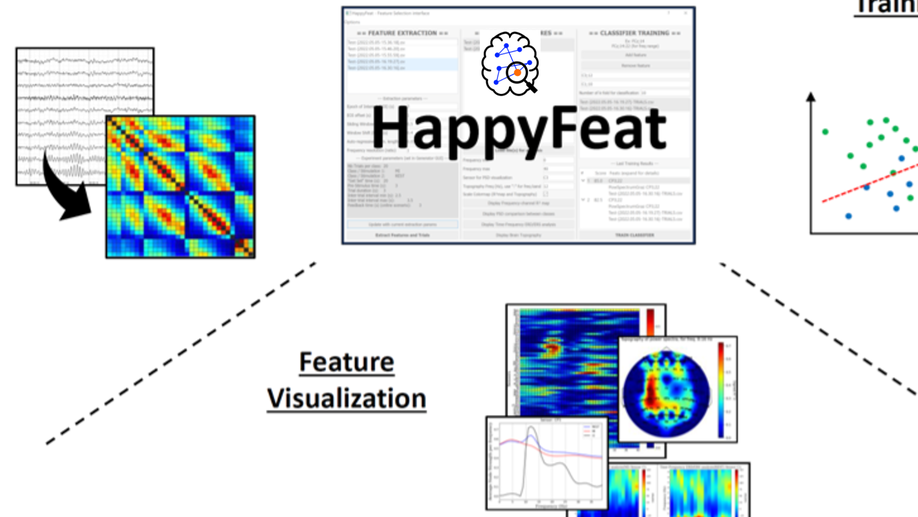

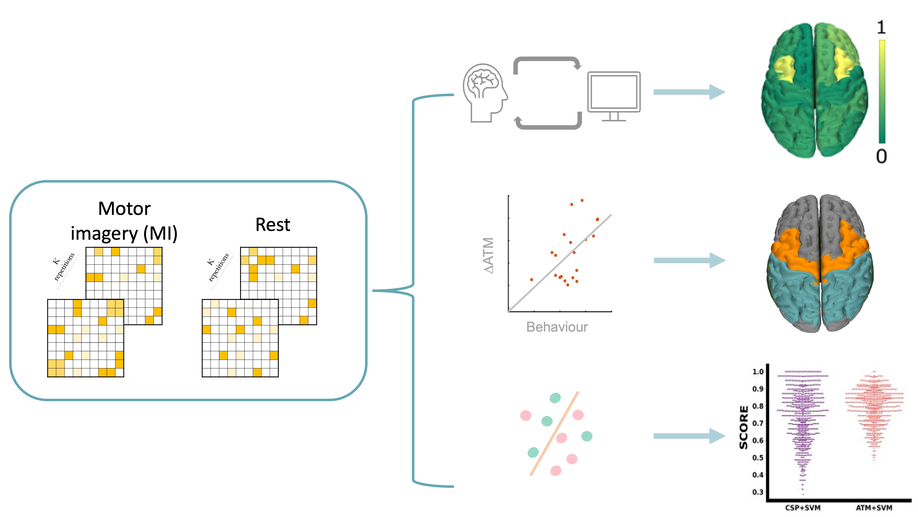

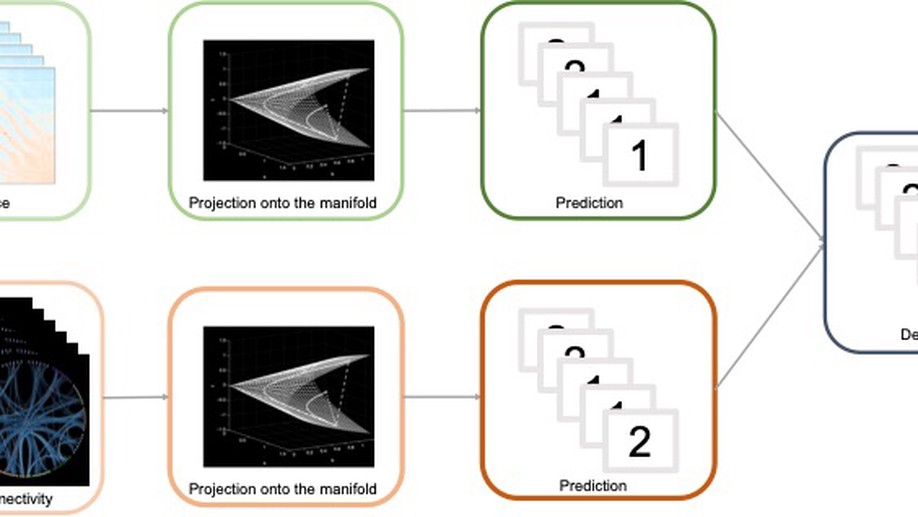

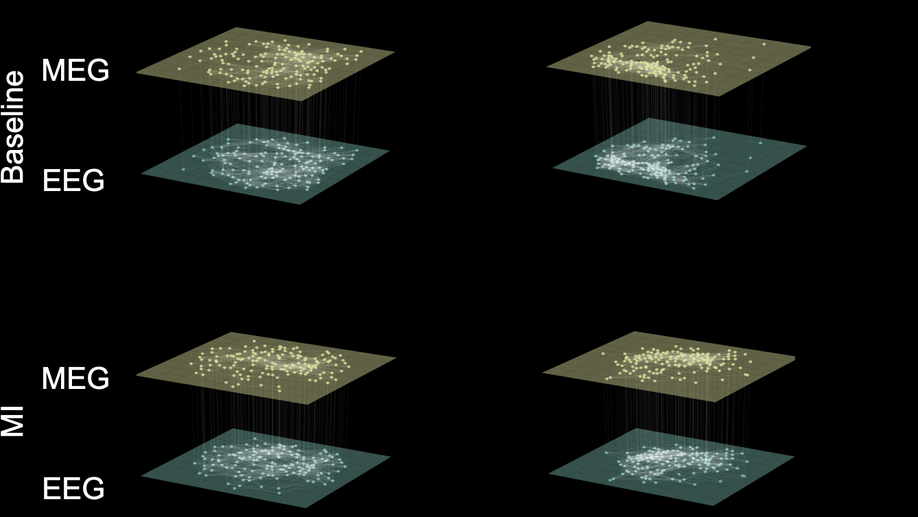

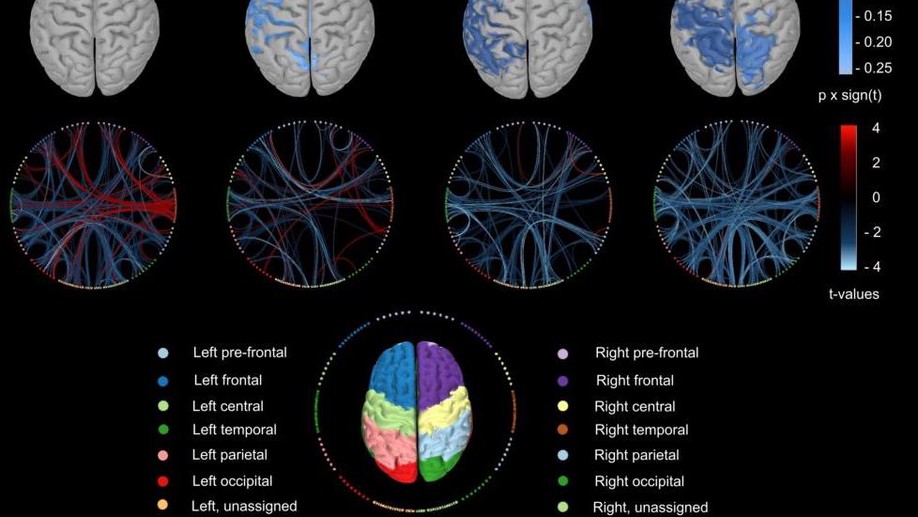

Background and Objectives: Amyotrophic lateral sclerosis (ALS) is a progressive neurodegenerative disorder that, beyond motor neuron loss, involves distributed cortical network dysfunction and marked clinical heterogeneity, motivating biologically grounded markers to track disease-related network disruption. Neuronal avalanches provide a framework to probe nonstationary propagation of neural activity. We introduce a novel functional connectivity metric derived from neuronal avalanches, the weighted stochastic ATM (wsATM), adapted from the original avalanche transition matrix (ATM) by incorporating activation and avalanche duration. We hypothesized that embedding temporal persistence would improve robustness and sensitivity to ALS-related alterations, and that these alterations would be quantitatively linked to standardized clinical severity and staging.

Methods: We tested this hypothesis on resting-state, source-reconstructed MEG data from 39 individuals with ALS and matched healthy controls. For each neuronal avalanche, we constructed a transition count matrix T, where each element Tij was incremented whenever region i was active at time t and region j was active at time t+1, thus capturing persistence through consecutive co-activations. Each matrix was then row-normalized to produce a row-stochastic transition probability matrix. Finally, these matrices were aggregated into a subject-level wsATM, with weights scaled according to the duration of each avalanche. Robustness was assessed by progressively removing avalanches and quantifying deviations from the full-signal wsATM. For clinical relevance, we propose a concordance framework that (i) identifies large-scale reorganization in ALS vs controls, (ii) tests whether individual propagation differences correlate with impairment (ALSFRS-R/MiToS), and (iii) highlights edges whose between-group changes align with within-patient severity in a directionally consistent, clinically interpretable way.
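
The construction described in the Methods can be illustrated with a minimal NumPy sketch. It is not the authors' implementation. It assumes that each avalanche is already available as a binary regions-by-time array, that rows with no outgoing transitions are left at zero, and that the duration weighting is a weighted average with weights equal to the number of time bins. The function names (`transition_counts`, `row_normalize`, `ws_atm`) are hypothetical.

```python
import numpy as np

def transition_counts(avalanche):
    """Count matrix T for one avalanche (binary array, regions x time).

    T[i, j] is the number of time steps t at which region i is active
    at t and region j is active at t + 1.
    """
    a = np.asarray(avalanche, dtype=float)
    return a[:, :-1] @ a[:, 1:].T

def row_normalize(counts):
    """Divide each row by its sum; all-zero rows stay zero (assumption)."""
    row_sums = counts.sum(axis=1, keepdims=True)
    return np.divide(counts, row_sums,
                     out=np.zeros_like(counts), where=row_sums > 0)

def ws_atm(avalanches):
    """Duration-weighted average of per-avalanche transition matrices.

    Assumption: the weight of each avalanche is its duration in time bins.
    """
    n_regions = np.asarray(avalanches[0]).shape[0]
    total = np.zeros((n_regions, n_regions))
    weight_sum = 0.0
    for av in avalanches:
        av = np.asarray(av)
        duration = av.shape[1]
        total += duration * row_normalize(transition_counts(av))
        weight_sum += duration
    return total / weight_sum

# Toy example: two regions, one avalanche of 3 bins and one of 2 bins.
example = [np.array([[1, 1, 0],
                     [0, 1, 1]]),
           np.array([[1, 1],
                     [0, 0]])]
subject_matrix = ws_atm(example)
```

With this weighting, longer avalanches contribute proportionally more to the subject-level matrix; other weighting schemes would be equally compatible with the description above.
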
Results: wsATM converged earlier and remained stable under substantial avalanche removal, with reduced inter-subject dispersion compared with ATM; these effects persisted across most truncation levels (up to ~65% removal). In ALS, wsATM detected more altered edges than ATM (370 vs 74), revealing widespread changes with prominent frontal and fronto-motor involvement. Clinical associations were also stronger with wsATM, with greater overlap between ALS-control edges and disability-related edges (wsATM: 17 and 15 edges for ALSFRS-R and MiToS, respectively, vs ATM: 6 and 2), concentrated in frontal/fronto-motor nodes including superior frontal and precentral regions.

Discussion: Our findings indicate that wsATM remains reliable with shorter recordings and after removal of artifact-contaminated segments, an advantage for clinical diagnostic use. Moreover, it appears to better capture disease-relevant network alterations, providing a biologically grounded set of features that could support ALS stratification.